Its hardware memory structure is simple and efficient. And it is not always successful.Ī scratchpad moves the burden of managing fast accesses to software. The magic of caches is that this cleverness has been hidden almost entirely from software.īut this cleverness costs energy. Decades of hardware research has found clever ways to determine what data to keep in the cache, how to find it, and when to throw it out. While most readers will not face the last choice, it is important for saving time and energy in the devices we love by keeping frequently-used information close at hand.Ĭaches are the workhorse of modern computers, feeding the processor with data about 100X faster than main memory. Full disclosure: He had the pleasure of working with one of the authors of the discussed paper- Sarita Adve-on her 1993 Ph.D. Hill of the University of Wisconsin-Madison. The following is a special contribution to this blog by CCC Executive Council Member Mark D. PROGRAMMING LANGUAGES / COMPILERS / SOFTWARE ENGINEERING.HUMAN-COMPUTER INTERACTION / GRAPHICS / VISUALIZATION.DATABASES / INFORMATICS / DATA SCIENCE / HPC.RFP – Creating Visions for Computing Research.CS for Social Good White Paper Competition.Lastly, our method is able to minimize the profile dependence issues which plague all similar allocation methods through careful analysis of static and dynamic profile information. Significant savings in runtime and energy across a large number of benchmarks were also observed when compared against cache memory organizations, showing our method's success under constrained SRAM sizes when dealing with dynamic data. When compared to placing all dynamic data variables in DRAM and only static data in scratch-pad, our results show that our method reduces the average runtime of our benchmarks by 22.3%, and the average power consumption by 26.7%, for the same size of scratch-pad fixed at 5% of total data size. 
With our method, code, global, stack and heap variables can share the same scratch-pad. Runtime methods based on software caching can place data in scratch-pad, but because of their high overheads from software address translation, they have not been successful, especially for dynamic data.In this thesis we present a dynamic yet compiler-directed allocation method for dynamic data that for the first time, (i) is able to place a portion of the dynamic data in scratch-pad (ii) has no software-caching tags (iii) requires no run-time per-access extra address translation and (iv) is able to move dynamic data back and forth between scratch-pad and DRAM to better track the program's locality characteristics. Existing compiler methods for allocating data to scratch-pad are able to place only code, global and stack data (static data) in scratch-pad memory heap and recursive-function objects (dynamic data) are allocated entirely in DRAM, resulting in poor performance for these dynamic data types. Dynamic data refers to all objects allocated at run-time in a program, as opposed to static data objects which are allocated at compile-time. cache and by its significantly lower overheads in access time, energy consumption, area and overall runtime. It is motivated by its better real-time guarantees vs.

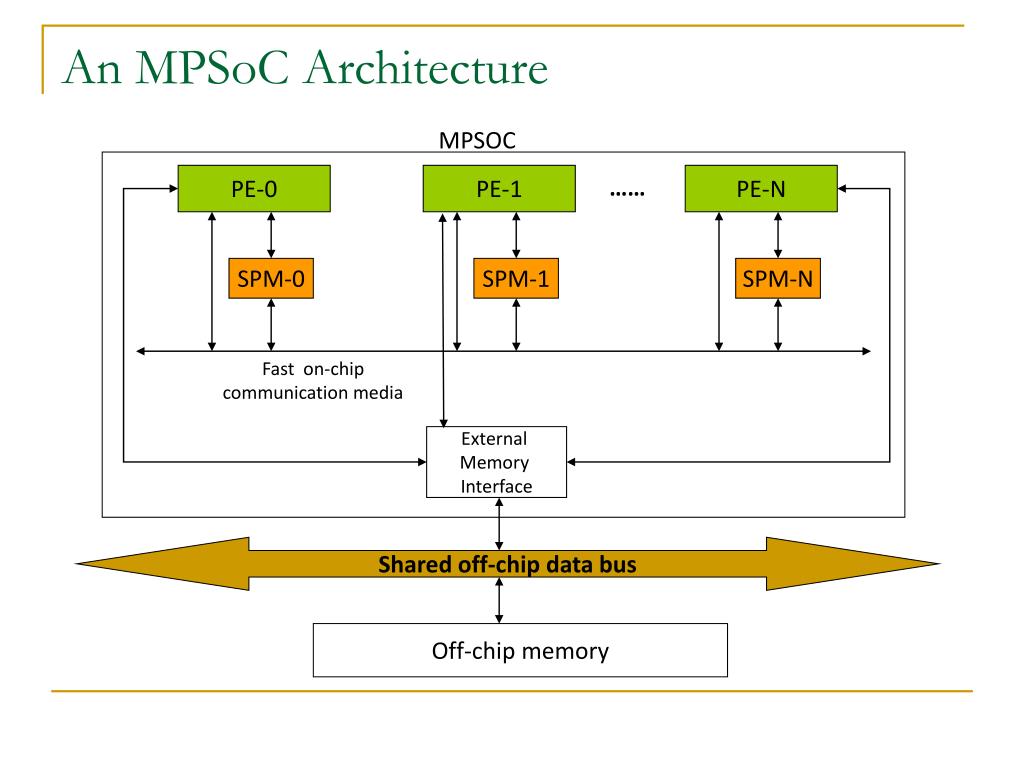

A scratch-pad is a fast directly addressed compiler-managed SRAM memory that replaces the hardware-managed cache.

This thesis presents the first-ever compile-time method for allocating a portion of a program's dynamic data to scratch-pad memory.
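To make the contrast between software caching and compiler-directed scratch-pad allocation more concrete, here is a toy Python cost model. It is only a sketch under stated assumptions, not the allocation algorithm from the thesis: the class names, the two-region access trace, and the direct-mapped software cache are all hypothetical, and write-backs to DRAM are ignored. It counts how much per-access bookkeeping each scheme performs on the same trace.

```python
"""Toy cost model: software caching vs. compiler-directed scratch-pad placement.

All names are hypothetical and the model is a deliberate simplification;
it is NOT the allocation algorithm described in the thesis.
"""


class SoftwareCache:
    """Software caching: every access consults a software tag table."""

    def __init__(self, num_lines):
        self.tags = [None] * num_lines  # tags kept and checked in software
        self.tag_checks = 0             # per-access address-translation work
        self.dram_transfers = 0         # objects fetched from DRAM

    def access(self, obj_id):
        self.tag_checks += 1            # paid on every access, hit or miss
        line = obj_id % len(self.tags)  # direct-mapped, for simplicity
        if self.tags[line] != obj_id:   # miss: fetch the object and retag
            self.tags[line] = obj_id
            self.dram_transfers += 1


class CompilerDirectedScratchpad:
    """Compiler-directed placement: copies happen only at region boundaries."""

    def __init__(self):
        self.resident = set()
        self.tag_checks = 0             # stays 0: no tags, no per-access lookup
        self.dram_transfers = 0

    def enter_region(self, objects):
        # Stand-in for copy code a compiler would insert at a region boundary.
        self.dram_transfers += len(set(objects) - self.resident)
        self.resident = set(objects)

    def access(self, obj_id):
        # The address is fixed ahead of time, so there is no run-time
        # bookkeeping. The assert is a simulation-only sanity check.
        assert obj_id in self.resident


def run_demo(accesses_per_object=100):
    """Run both schemes on the same hypothetical two-region trace."""
    regions = [(0, 1), (2, 3)]          # objects used by each program region
    cache = SoftwareCache(num_lines=2)
    spm = CompilerDirectedScratchpad()
    for objects in regions:
        spm.enter_region(objects)
        for _ in range(accesses_per_object):
            for obj_id in objects:
                cache.access(obj_id)
                spm.access(obj_id)
    return {
        "cache_tag_checks": cache.tag_checks,
        "cache_dram_transfers": cache.dram_transfers,
        "spm_tag_checks": spm.tag_checks,
        "spm_dram_transfers": spm.dram_transfers,
    }


if __name__ == "__main__":
    print(run_demo())
```

In this toy trace both schemes move the same number of objects from DRAM, but only the software cache pays a tag check on every access. That per-access cost is the overhead the abstract attributes to software address translation.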
